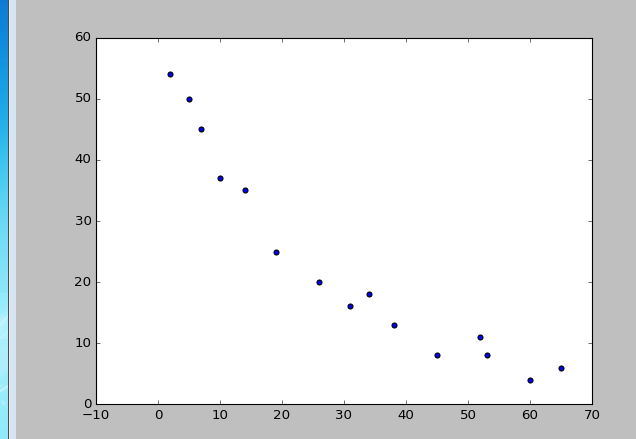

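data2 contains basic data on severely injured patients. The independent variable X is the number of days the patient was hospitalized; the dependent variable Y is a prognostic index of long-term recovery after discharge, where a larger value indicates a better outcome.

The task is to fit a suitable linear or nonlinear model to the data.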

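Procedure:

1. Judging from the scatter plot, the candidate models are linear regression and the logarithmic, exponential, and power-function forms.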

# -*- coding: utf-8 -*-
import pandas as pd
import matplotlib.pyplot as plt
from sklearn import metrics

# Read columns 1 and 2 (X and Y) from the whitespace-separated data file
data2 = pd.read_table(r'C:/Users/Administrator/Desktop/data2.txt', sep=r'\s+',
                      encoding='gbk', usecols=(1, 2))

# Scatter plot of Y against X (uncomment to display)
# plt.scatter(data2['X'], data2['Y'])
# plt.show()


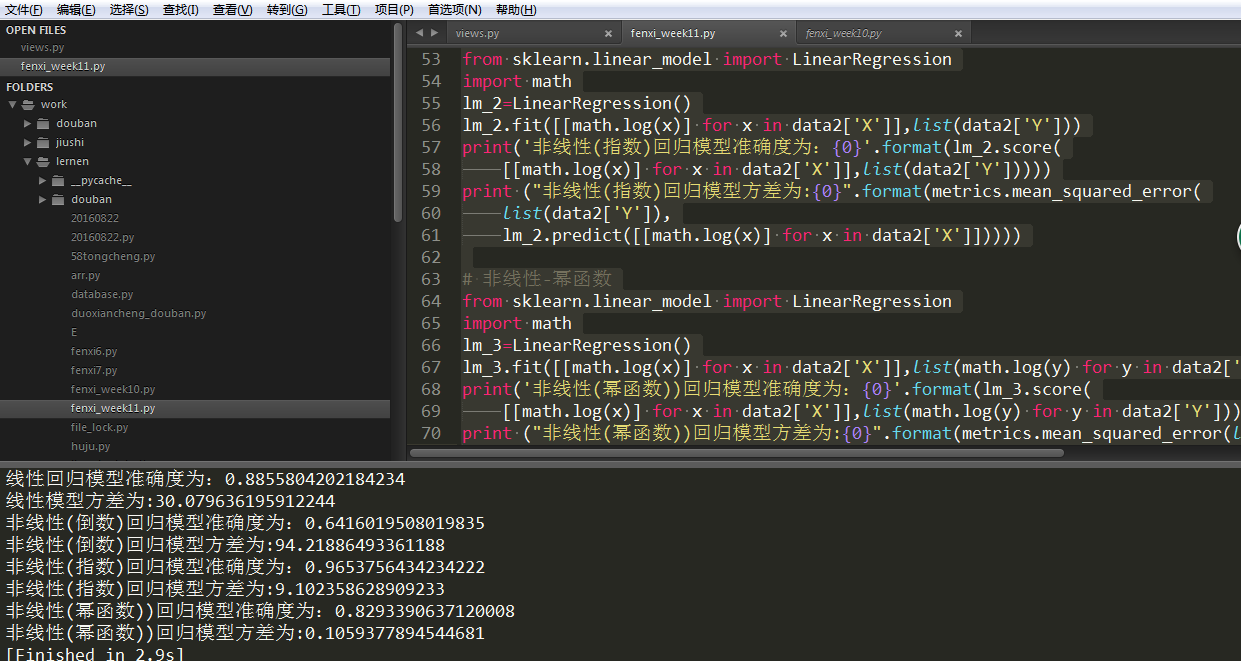

2. The regression results for each model are as follows:

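# Linear regression: Y = a + b*X
from sklearn.linear_model import LinearRegression
lm = LinearRegression()
X_lin = [[x] for x in data2['X']]
y_lin = list(data2['Y'])
lm.fit(X_lin, y_lin)
# score() returns the coefficient of determination R^2, not a classification accuracy
print('Linear regression R^2: {0}'.format(lm.score(X_lin, y_lin)))
# mean_squared_error() is the mean squared residual (MSE), not a variance
print('Linear regression MSE: {0}'.format(
    metrics.mean_squared_error(y_lin, lm.predict(X_lin))))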

# Nonlinear, reciprocal: Y = a + b/X
from sklearn.linear_model import LinearRegression
lm_1 = LinearRegression()
X_rec = [[1.0 / x] for x in data2['X']]
y_rec = list(data2['Y'])
lm_1.fit(X_rec, y_rec)
# R^2 and MSE of the reciprocal model, both on the original Y scale
print('Reciprocal regression R^2: {0}'.format(lm_1.score(X_rec, y_rec)))
print('Reciprocal regression MSE: {0}'.format(
    metrics.mean_squared_error(y_rec, lm_1.predict(X_rec))))

# Nonlinear: Y = a + b*ln(X)
# Called the "exponential" model in this write-up; regressing Y on ln(X) is,
# strictly speaking, a logarithmic model.
from sklearn.linear_model import LinearRegression
import math
lm_2 = LinearRegression()
X_log = [[math.log(x)] for x in data2['X']]
y_log = list(data2['Y'])
lm_2.fit(X_log, y_log)
# R^2 and MSE of the fit on ln(X), both on the original Y scale
print('Regression on ln(X) R^2: {0}'.format(lm_2.score(X_log, y_log)))
print('Regression on ln(X) MSE: {0}'.format(
    metrics.mean_squared_error(y_log, lm_2.predict(X_log))))

# Nonlinear, power function: ln(Y) = a + b*ln(X), i.e. Y = exp(a) * X**b
from sklearn.linear_model import LinearRegression
import math
lm_3 = LinearRegression()
X_pow = [[math.log(x)] for x in data2['X']]
y_pow = [math.log(y) for y in data2['Y']]
lm_3.fit(X_pow, y_pow)
# Both metrics below are computed on ln(Y), not on Y itself
print('Power-function regression R^2 (log scale): {0}'.format(lm_3.score(X_pow, y_pow)))
print('Power-function regression MSE (log scale): {0}'.format(
    metrics.mean_squared_error(y_pow, lm_3.predict(X_pow))))
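For reference, the four fits above can be written as the following model forms, each estimated by ordinary least squares after the indicated transformation. The symbols $a$ and $b$ are notation introduced here for the intercept and slope; they do not appear in the original code.

```latex
\begin{align*}
\text{linear (lm):} \quad & Y = a + bX \\
\text{reciprocal (lm\_1):} \quad & Y = a + \frac{b}{X} \\
\text{fit on } \ln X \text{ (lm\_2):} \quad & Y = a + b\ln X \\
\text{power function (lm\_3):} \quad & \ln Y = a + b\ln X \;\Longleftrightarrow\; Y = e^{a}X^{b}
\end{align*}
```

The first three are fitted with Y as the response, while the power-function model is fitted with $\ln Y$ as the response, so the metrics printed for it are on the log scale.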

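Weighing R² and mean squared error together, the third model (Y regressed on ln X, i.e. Y = a + b·ln X) is the best. Strictly speaking this is a logarithmic model rather than an exponential one. Note also that the power-function metrics are computed on ln Y, so they are not directly comparable with those of the other three models.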
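One way to make such a comparison fairer is to back-transform every model's predictions to the original Y scale before computing R² and MSE. The following is a minimal sketch of that idea, not the author's method. It uses closed-form simple least squares in plain Python so that it runs without the data file or scikit-learn (for a single feature, `LinearRegression` should give the same coefficients). The names `ols`, `r2_and_mse`, `MODELS` and `compare_on_original_scale` are hypothetical, and the data are synthetic values generated from an exponential curve, not the data2 values. It also includes a true exponential model (ln Y regressed on X) for contrast.

```python
import math

def ols(u, v):
    """Closed-form simple least squares: (intercept, slope) for v = a + b*u."""
    n = len(u)
    mu, mv = sum(u) / n, sum(v) / n
    sxx = sum((ui - mu) ** 2 for ui in u)
    sxy = sum((ui - mu) * (vi - mv) for ui, vi in zip(u, v))
    b = sxy / sxx
    return mv - b * mu, b

def r2_and_mse(y, y_hat):
    """R^2 and mean squared error, both measured on the original Y scale."""
    n = len(y)
    my = sum(y) / n
    ss_res = sum((yi - hi) ** 2 for yi, hi in zip(y, y_hat))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1 - ss_res / ss_tot, ss_res / n

# Each entry: (transform of X, transform of Y, inverse transform back to Y)
MODELS = {
    'linear':      (lambda x: x,           lambda y: y,           lambda t: t),
    'reciprocal':  (lambda x: 1.0 / x,     lambda y: y,           lambda t: t),
    'logarithmic': (lambda x: math.log(x), lambda y: y,           lambda t: t),
    'exponential': (lambda x: x,           lambda y: math.log(y), math.exp),
    'power':       (lambda x: math.log(x), lambda y: math.log(y), math.exp),
}

def compare_on_original_scale(xs, ys):
    """Fit every model in MODELS and score it against the untransformed ys."""
    results = {}
    for name, (fx, fy, inverse) in MODELS.items():
        u = [fx(x) for x in xs]
        v = [fy(y) for y in ys]
        a, b = ols(u, v)
        # Back-transform the fitted values so all models are scored on Y
        y_hat = [inverse(a + b * ui) for ui in u]
        results[name] = r2_and_mse(ys, y_hat)
    return results

# Synthetic, noise-free data from Y = 50 * exp(-0.04 * X); NOT the data2 values
xs = [2, 5, 7, 10, 14, 19, 26, 31, 34, 38, 45, 52, 53, 60, 65]
ys = [50 * math.exp(-0.04 * x) for x in xs]

results = compare_on_original_scale(xs, ys)
for name, (r2, mse) in results.items():
    print('{0:<12} R^2 = {1:.4f}  MSE = {2:.4f}'.format(name, r2, mse))

# Model with the highest R^2 on the original scale
best = max(results, key=lambda k: results[k][0])
print('best:', best)
```

Because the synthetic data follow an exponential curve exactly, the exponential model should come out on top here. On the real data2 values the ranking may differ, so the sketch illustrates only the evaluation procedure, not a result.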
